How I Chained Two Codex /goal Runs to Build a Complete CLI Tool

One paragraph describing a CLI idea.

Two /goal commands. Eighty minutes of letting the machine work.

A complete CLI tool at the end — 35 tests passing across 6 files, build green, typecheck green, every acceptance criterion mapped to evidence.

Last week’s post showed /goal building a single WordPress plugin in 28 minutes. One goal, one feature, one walk-away-and-come-back. That was the proof of concept.

This time: two goals, chained. The first goal built the MVP command surface in 32 minutes. The second added OAuth authentication in 47 minutes. Both ran fully autonomously while I was doing something else.

The vehicle for this build is one you might recognize — the same Reddit summarizer I built back in March using the 6-step Workflow Engineering process. That version was an Express server with REST endpoints and active supervision throughout. It worked. But every time I wanted an AI agent to pull Reddit data, it had to start the server, make HTTP calls, parse responses — burning tokens on ceremony. A CLI tool that an agent can invoke directly from the command line, get structured JSON back, and move on? That consumes a fraction of the tokens and saves real money on Claude and Codex subscriptions.

So I rebuilt it.

Back in March, building that summarizer required active supervision across six steps — spec brainstorm, review, test plan, implementation plan, execute, test. This time: two skill invocations and two /goal commands, with less than ten minutes of human input across the whole thing.

The codex goal command scales beyond single features.

When a project is too big for one goal, you slice it — and the skill can help you find the seams.

.

.

.

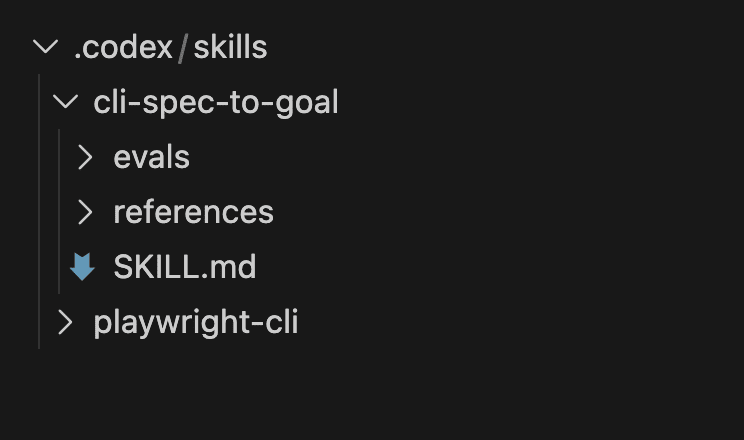

Meet cli-spec-to-goal — The Skill That Splits

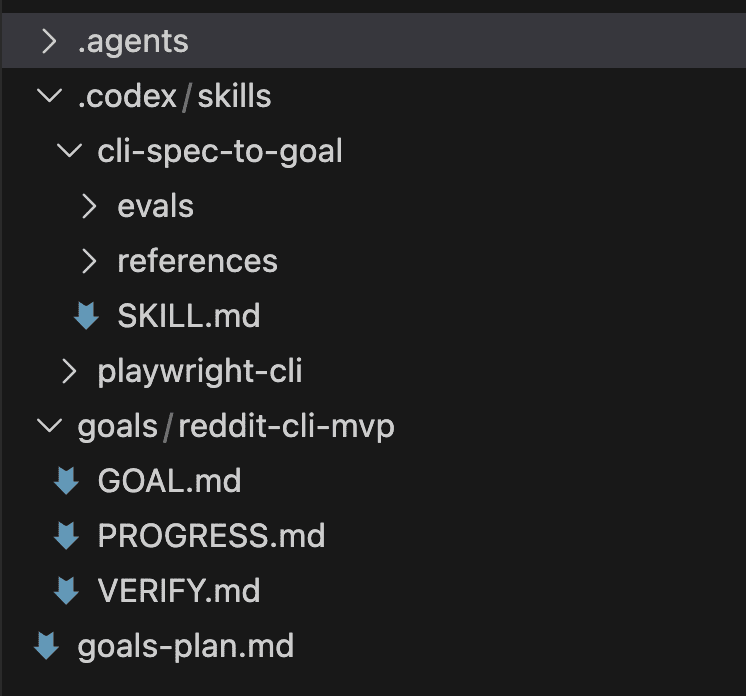

Last week’s post introduced wp-spec-to-goal, a Codex Agent skill that turns a vague paragraph into the GOAL.md / VERIFY.md / PROGRESS.md trio that Codex needs to finish a goal autonomously. That skill was designed for WordPress plugins.

cli-spec-to-goal is its counterpart for CLI tools.

Same core workflow — take a vague idea, ask focused questions, produce the goal bundle plus an optional project scaffold. One key difference sets it apart.

Automatic complexity detection.

The WP skill always produced one goal.

The CLI skill inspects the spec and judges whether the project fits in a single goal or should be split into multiple slices. When it decides to split, it writes a goals-plan.md at the project root with numbered slices and generates the first goal bundle only — leaving the rest for later invocations.

(That split detection turned out to be the most interesting part of the whole build. More on that in a moment.)

Every goal it generates includes six AI-agent-friendly patterns: --json output mode, stdout/stderr separation, TTY detection, meaningful exit codes, structured errors, and --dry-run previews. These patterns make the resulting CLI safe for AI agents to invoke directly. The repo has the full list in the skill’s reference templates.
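To make a few of those patterns concrete, here is a minimal TypeScript sketch of how a command could route its output. It is not code from the repo or the skill's templates. Results go to stdout and diagnostics go to stderr. An explicit --json flag, or a stdout that is not a TTY, switches output to JSON. Failures become a structured error object plus a non-zero exit code. The names `CliError`, `emit`, and `ExitCode`, and the exit code values, are hypothetical.

```typescript
// Hypothetical exit codes; the generated CLI may use different values.
const ExitCode = {
  Ok: 0,
  Failure: 1,
  Usage: 2,
} as const;

// Structured error that serializes cleanly for agents.
class CliError extends Error {
  readonly code: string;
  readonly exitCode: number;
  constructor(code: string, message: string, exitCode: number = ExitCode.Failure) {
    super(message);
    this.name = "CliError";
    this.code = code;
    this.exitCode = exitCode;
  }
}

// Minimal stream shape so tests can capture output instead of using process.stdout.
interface Out {
  write(chunk: string): unknown;
  isTTY?: boolean;
}

interface EmitOptions {
  json: boolean; // value of the --json flag
  stdout: Out;
  stderr: Out;
}

// JSON when explicitly requested, or when stdout is piped (not a terminal).
function wantsJson(opts: EmitOptions): boolean {
  return opts.json || !opts.stdout.isTTY;
}

// Runs a command body and returns an exit code rather than calling process.exit,
// so the caller decides when the process ends.
async function emit<T>(
  opts: EmitOptions,
  run: () => Promise<T>,
  renderHuman: (result: T) => string,
): Promise<number> {
  try {
    const result = await run();
    if (wantsJson(opts)) {
      opts.stdout.write(JSON.stringify({ ok: true, data: result }) + "\n");
    } else {
      opts.stdout.write(renderHuman(result) + "\n");
    }
    return ExitCode.Ok;
  } catch (err) {
    const e =
      err instanceof CliError
        ? err
        : new CliError("UNEXPECTED", err instanceof Error ? err.message : String(err));
    // Errors always go to stderr so stdout stays parseable.
    if (wantsJson(opts)) {
      opts.stderr.write(
        JSON.stringify({ ok: false, error: { code: e.code, message: e.message } }) + "\n",
      );
    } else {
      opts.stderr.write(`error: ${e.message}\n`);
    }
    return e.exitCode;
  }
}
```

In a real entry point, the caller would pass process.stdout and process.stderr and assign the returned number to process.exitCode. Returning the code instead of exiting keeps the helper testable.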

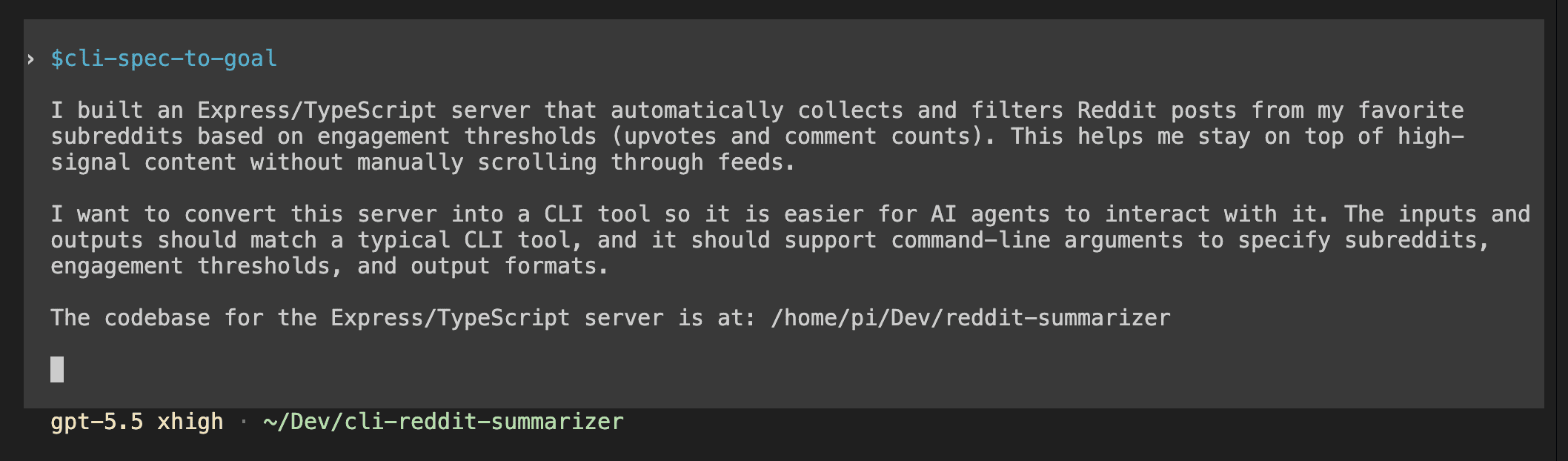

For the invocation, I typed one paragraph.

It described what the existing Express server does, said I wanted a CLI version that’s easier for AI agents to interact with, and pointed at the server codebase as a read-only reference.

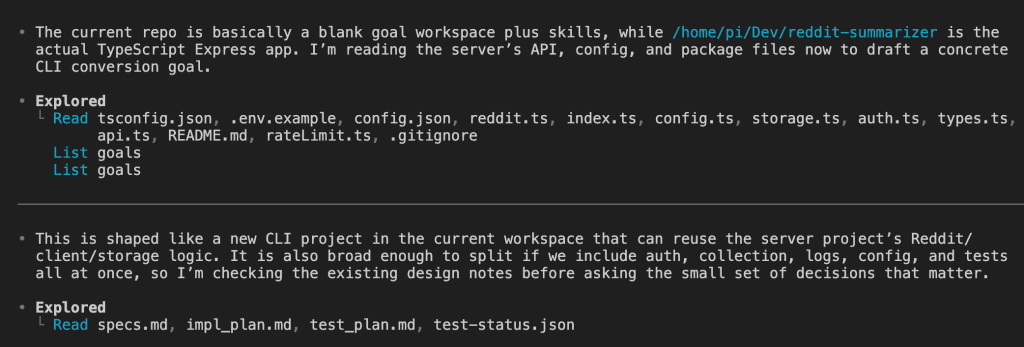

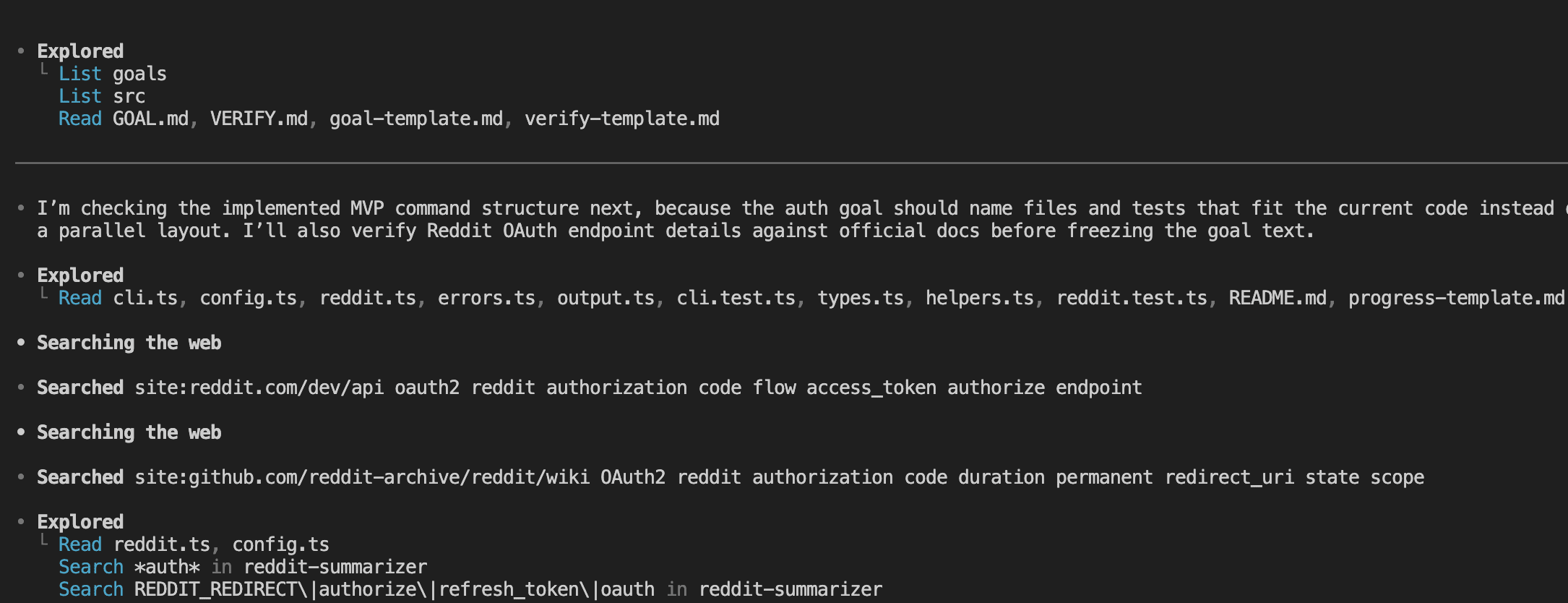

The skill probed both repos before asking anything.

It read the empty target repo, then explored the Express server’s source files — config, Reddit API client, storage patterns, route handlers, rate limiting, test structure. It identified this as a project broad enough to warrant splitting.

.

.

.

The Split Decision

This is the moment the skill earned its keep.

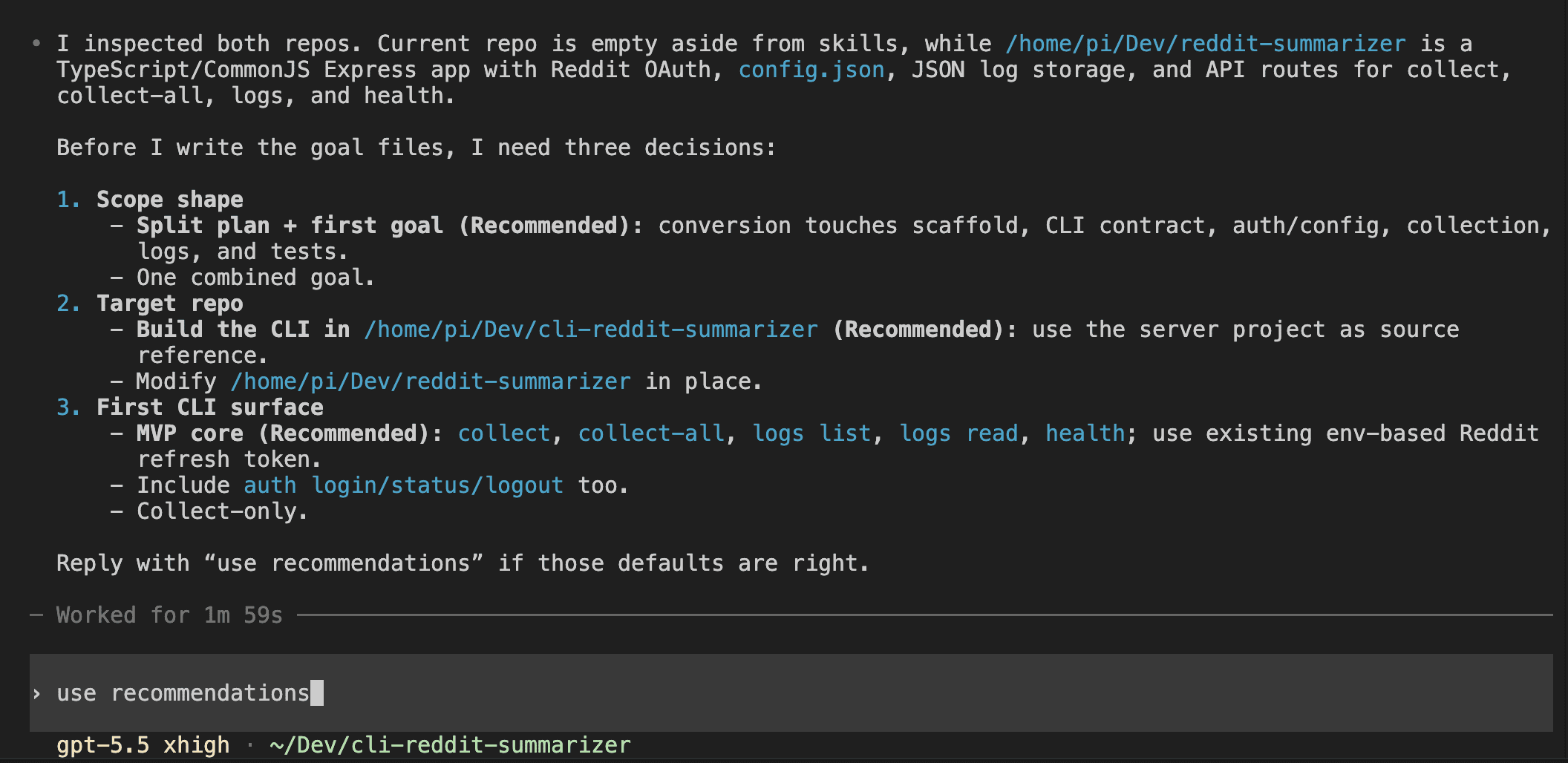

After probing both repos — the empty target and the existing Express server — it came back with three decisions to confirm:

- Scope shape: Split plan + first goal (recommended) vs. one combined goal

- Target repo: Build the CLI in the new repo, using the server as a read-only source reference

- First CLI surface: MVP core commands (collect, collect-all, logs list, logs read, health) using an existing env-based refresh token. No OAuth login in the first slice.

I replied “use recommendations” and the skill started writing.

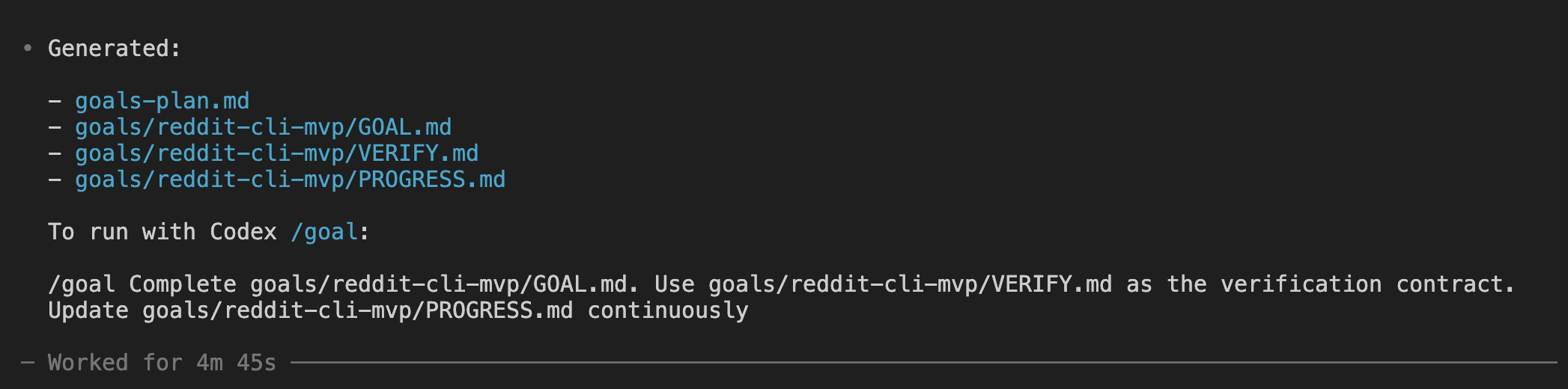

It pulled its reference templates — goal, verify, and progress — and began generating. Two and a half minutes of file creation later, here’s what appeared:

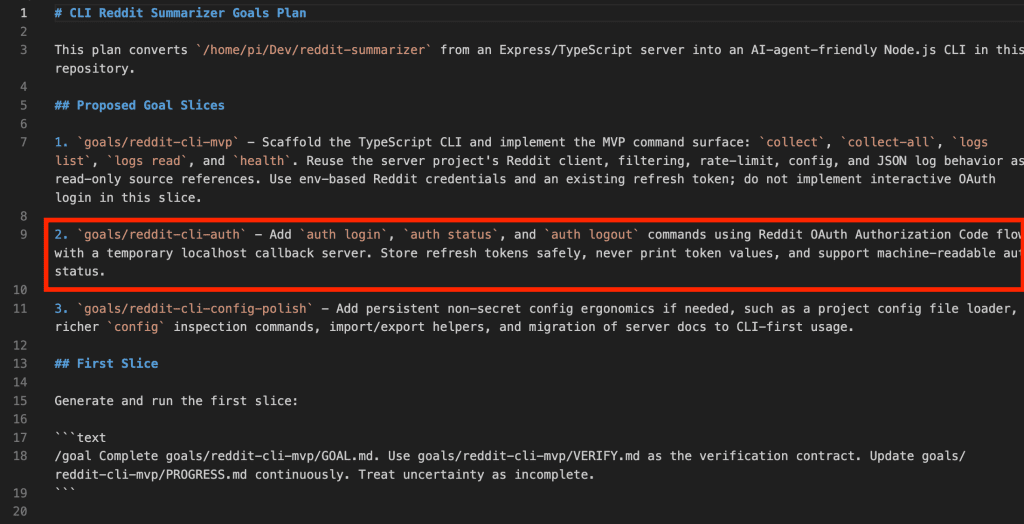

A goals-plan.md listing three proposed goal slices (MVP, auth, config polish).

A complete goal trio for the first slice: GOAL.md with 4 user stories and 15 acceptance criteria, VERIFY.md with binary smoke checks, functional checks, exit code checks, and integration checks, and a skeleton PROGRESS.md ready for Codex to fill in during the goal run.
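An exit code check of the kind VERIFY.md lists comes down to three steps: run a command, compare its exit status, and optionally confirm that stdout parses as JSON. Here is a minimal TypeScript sketch using Node's built-in `spawnSync`. The `checkExit` function and `CheckResult` shape are hypothetical, not the format the skill generates.

```typescript
import { spawnSync } from "node:child_process";

interface CheckResult {
  name: string;
  passed: boolean;
  detail: string;
}

// Runs a command synchronously, checks its exit status, and (optionally)
// verifies that stdout is valid JSON.
function checkExit(
  name: string,
  command: string,
  args: string[],
  expectedExit: number,
  expectJson = false,
): CheckResult {
  const res = spawnSync(command, args, { encoding: "utf8" });
  if (res.error) {
    return { name, passed: false, detail: `spawn failed: ${res.error.message}` };
  }
  if (res.status !== expectedExit) {
    return { name, passed: false, detail: `exit ${res.status}, expected ${expectedExit}` };
  }
  if (expectJson) {
    try {
      JSON.parse(res.stdout);
    } catch {
      return { name, passed: false, detail: "stdout is not valid JSON" };
    }
  }
  return { name, passed: true, detail: "ok" };
}
```

For example, `checkExit("dry run", "node", ["bin/reddit-summarizer.js", "collect", "--subreddit", "ClaudeCode", "--dry-run", "--json"], 0, true)` would check the dry-run command shown later in this post. Treat the expected exit code as an assumption about the contract.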

It also produced the exact /goal command to paste — tailored to the file paths it had just created.

Total time from invocation to handoff: 4 minutes 45 seconds.

.

.

.

Goal 1: The MVP (32 Minutes)

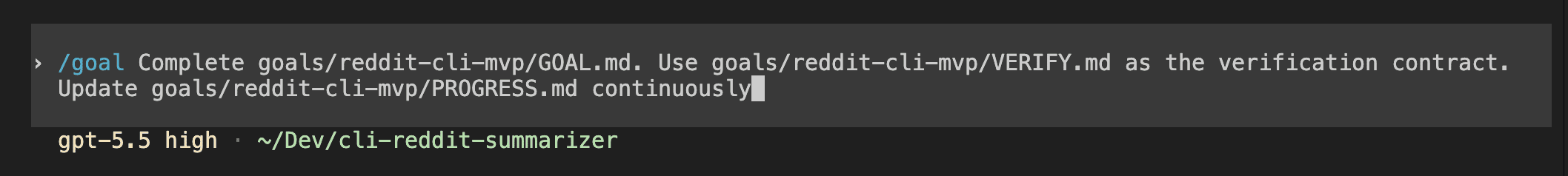

The handoff: paste the /goal command, press enter, walk away.

/goal Complete goals/reddit-cli-mvp/GOAL.md. Use goals/reddit-cli-mvp/VERIFY.md as the verification contract. Update goals/reddit-cli-mvp/PROGRESS.md continuously. Treat uncertainty as incomplete.

Short command.

Heavy lifting lives in the files the skill already wrote.

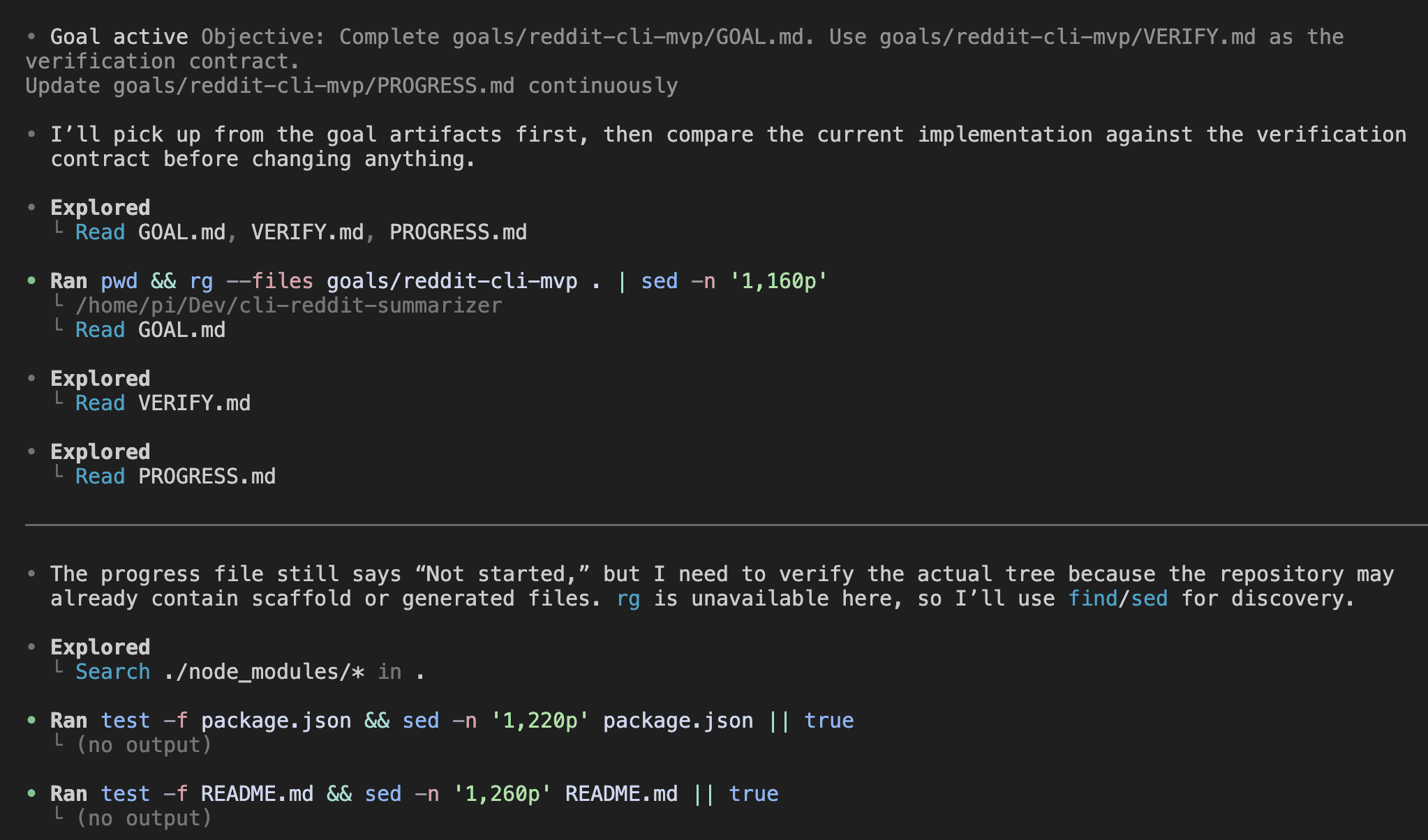

Codex activated the goal.

It read GOAL.md, VERIFY.md, and PROGRESS.md, inspected the current repo state, checked for any existing scaffold or generated files, and started implementing. It used the Express server source as a read-only reference — pulling the Reddit client patterns, filtering logic, and JSON log structure — while building the TypeScript CLI from scratch.

Then the black box.

I left. Codex worked.

I went to make coffee. Checked YouTube while it brewed. The Codex session kept running through file edits, test runs, and self-audits in the background. The session was busy. I was elsewhere.

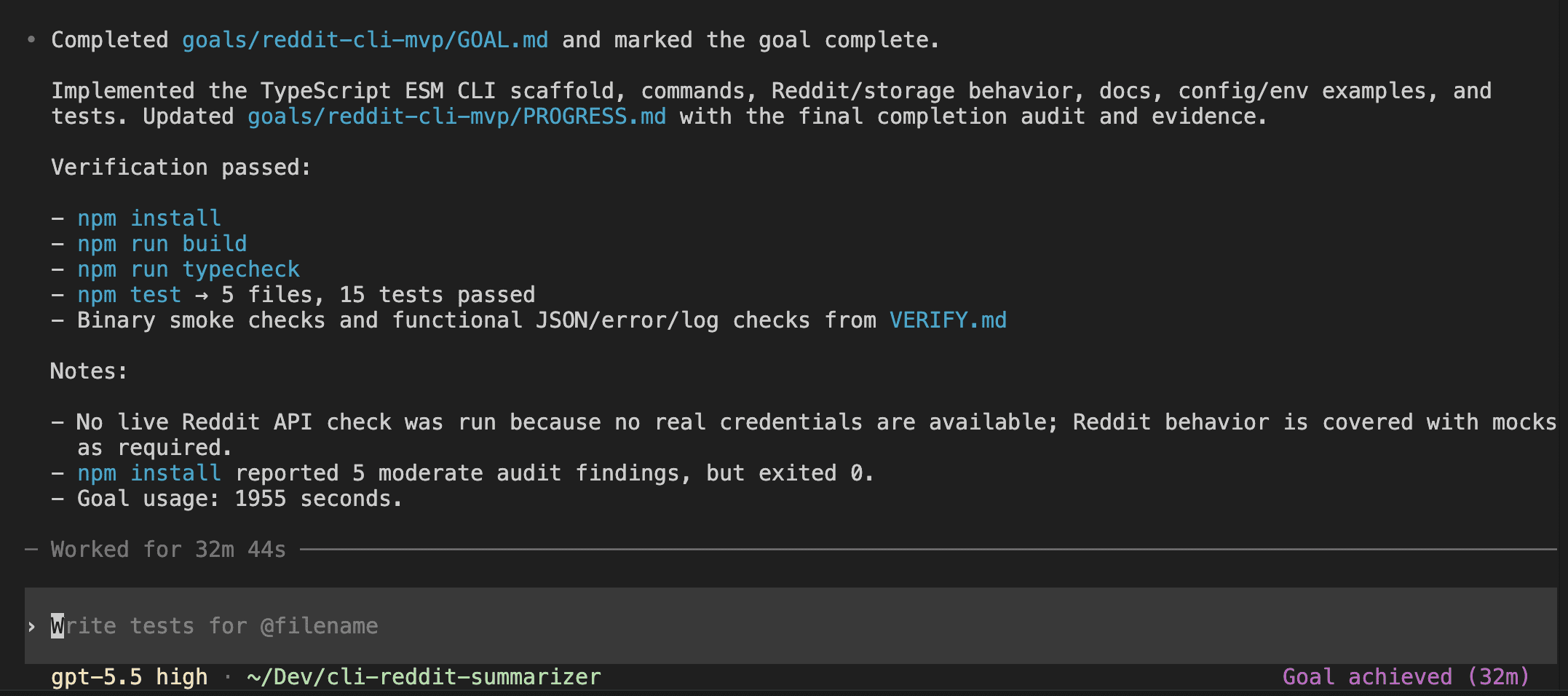

32 minutes and 44 seconds later, the goal was marked complete.

What shipped:

- The full TypeScript ESM CLI scaffold with Commander.js

- 5 commands (collect, collect-all, logs list, logs read, health)

- 26 source files

- 5 test files with 15 tests, all passing

npm install, npm run build, npm run typecheck, and npm test all came back green. Binary smoke checks and functional JSON/error/log checks from VERIFY.md all passed.

If you read last week’s post, this shape should look familiar. The spec defines the boundaries, the continuation prompt refuses to declare victory without evidence, and you trust the result because the audit trail is sitting right there in PROGRESS.md.

One honest note from the completion summary: no live Reddit API check was run, because no real credentials were available in the build environment. Tests used mocks, as required by the verification contract. Codex noted this explicitly — the kind of transparency you want from an autonomous run.
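Mock-only tests are easiest when the Reddit client takes its HTTP transport as a parameter, so a test can pass in a fake function in place of the real network call. The sketch below shows that idea in TypeScript. It is an assumption about how such a client could be structured, not a description of the repo's code. The `RedditClient` class and `FetchLike` type are hypothetical. The `https://oauth.reddit.com/r/<subreddit>/new` listing endpoint is Reddit's, chosen here for illustration.

```typescript
// Minimal fetch-like signature; the global fetch (Node 18+) satisfies it.
type FetchLike = (
  url: string,
  init?: { headers?: Record<string, string> },
) => Promise<{ ok: boolean; status: number; json(): Promise<unknown> }>;

interface RedditPost {
  title: string;
  score: number;
  num_comments: number;
}

// Hypothetical client with the transport injected through the constructor.
class RedditClient {
  private readonly fetchFn: FetchLike;
  private readonly accessToken: string;

  constructor(fetchFn: FetchLike, accessToken: string) {
    this.fetchFn = fetchFn;
    this.accessToken = accessToken;
  }

  async newPosts(subreddit: string, limit = 25): Promise<RedditPost[]> {
    const url = `https://oauth.reddit.com/r/${encodeURIComponent(subreddit)}/new?limit=${limit}`;
    const res = await this.fetchFn(url, {
      headers: {
        Authorization: `Bearer ${this.accessToken}`,
        "User-Agent": "reddit-cli-sketch/0.1", // hypothetical; Reddit expects a descriptive UA
      },
    });
    if (!res.ok) throw new Error(`Reddit returned HTTP ${res.status}`);
    // Reddit listings wrap each post as { kind, data } inside data.children.
    const body = (await res.json()) as { data: { children: { data: RedditPost }[] } };
    return body.data.children.map((c) => c.data);
  }
}
```

Production code would pass the global `fetch`, and tests would pass a stub that returns canned listing JSON. No live credentials are needed in either case.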

.

.

.

Goal 2: OAuth Auth (47 Minutes, Same Pattern)

The MVP left a deliberate gap.

It required an existing REDDIT_REFRESH_TOKEN in .env to call the Reddit API — functional for a developer who already has credentials, but no way to acquire them through the tool itself. The goals-plan.md had already named the next slice: reddit-cli-auth.
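For context, this is roughly what "call the Reddit API with an existing refresh token" involves. Reddit's OAuth2 documentation describes a POST to `https://www.reddit.com/api/v1/access_token` with HTTP Basic auth (client ID and secret) and a form body of `grant_type=refresh_token`. The TypeScript sketch below builds that request without sending it. Only `REDDIT_REFRESH_TOKEN` comes from the post. `REDDIT_CLIENT_ID`, `REDDIT_CLIENT_SECRET`, and `buildRefreshRequest` are hypothetical names.

```typescript
interface TokenRequest {
  url: string;
  method: "POST";
  headers: Record<string, string>;
  body: string;
}

// Builds (but does not send) Reddit's refresh-token exchange request.
function buildRefreshRequest(
  env: Record<string, string | undefined>,
  userAgent: string,
): TokenRequest {
  const clientId = env.REDDIT_CLIENT_ID; // hypothetical variable name
  const clientSecret = env.REDDIT_CLIENT_SECRET ?? ""; // installed apps use an empty secret
  const refreshToken = env.REDDIT_REFRESH_TOKEN;
  if (!clientId || !refreshToken) {
    throw new Error("REDDIT_CLIENT_ID and REDDIT_REFRESH_TOKEN must be set");
  }
  const basic = Buffer.from(`${clientId}:${clientSecret}`).toString("base64");
  const body = new URLSearchParams({
    grant_type: "refresh_token",
    refresh_token: refreshToken,
  }).toString();
  return {
    url: "https://www.reddit.com/api/v1/access_token",
    method: "POST",
    headers: {
      Authorization: `Basic ${basic}`,
      "Content-Type": "application/x-www-form-urlencoded",
      "User-Agent": userAgent,
    },
    body,
  };
}
```

Sending it would be `fetch(req.url, { method: req.method, headers: req.headers, body: req.body })`. Separating "build" from "send" keeps the request shape unit-testable without credentials.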

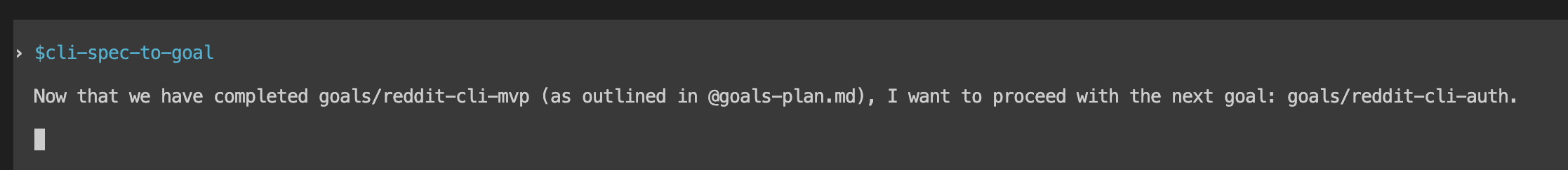

I invoked the skill again, this time with one sentence: “Now that we have completed goals/reddit-cli-mvp, I want to proceed with the next goal: goals/reddit-cli-auth.”

The skill scanned the now-implemented codebase.

The repo that was empty an hour ago now had 26 source files, a working test suite, and a complete CLI structure. It read the existing Commander setup, Vitest test patterns, error handling conventions, and .env configuration. It also searched Reddit’s OAuth2 documentation to verify endpoint details and token flow specifics.

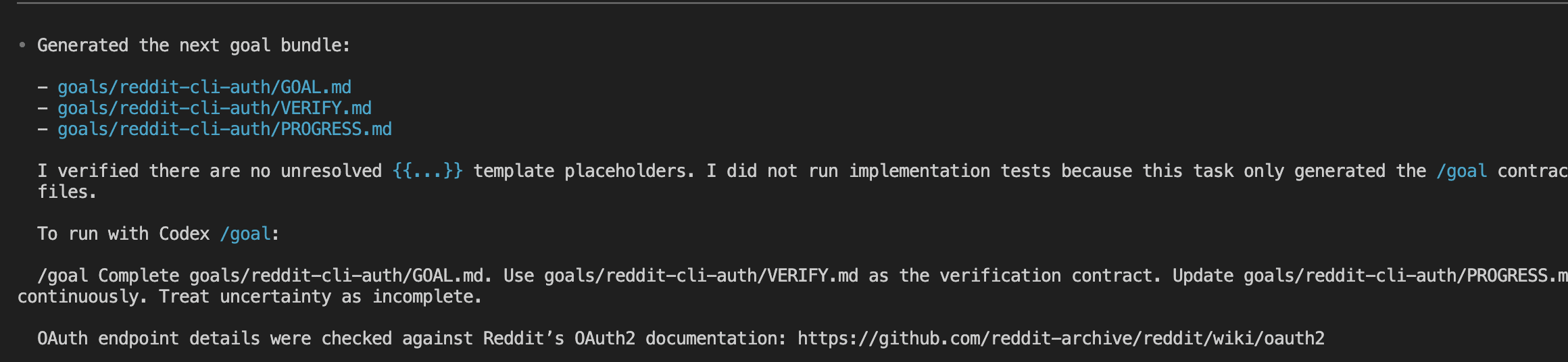

The GOAL.md it produced fit into the existing codebase. It referenced the same Commander program, the same test framework, the same error types, and the same .env storage approach. The skill verified there were no unresolved template placeholders, confirmed it had only generated the /goal contract files (no implementation), and cross-checked OAuth endpoint details against Reddit’s official documentation.
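The "no unresolved template placeholders" check is simple to reproduce. The post doesn't say what placeholder syntax the templates use, so this TypeScript sketch assumes a hypothetical `{{UPPER_SNAKE}}` form. Adjust the pattern to whatever the real templates contain. The function names are illustrative.

```typescript
import { readFileSync } from "node:fs";

// Assumed placeholder syntax: {{NAME}} or {{ NAME }} in upper snake case.
const PLACEHOLDER = /\{\{\s*[A-Z0-9_]+\s*\}\}/g;

interface Unresolved {
  line: number; // 1-based line number
  token: string;
}

// Returns every placeholder still present in the text.
function findPlaceholders(text: string): Unresolved[] {
  const hits: Unresolved[] = [];
  text.split("\n").forEach((lineText, i) => {
    // With the g flag, match() returns all matches on the line (or null).
    for (const token of lineText.match(PLACEHOLDER) ?? []) {
      hits.push({ line: i + 1, token });
    }
  });
  return hits;
}

// Maps each file that still has placeholders to its hits.
function checkGoalFiles(paths: string[]): Map<string, Unresolved[]> {
  const report = new Map<string, Unresolved[]>();
  for (const p of paths) {
    const hits = findPlaceholders(readFileSync(p, "utf8"));
    if (hits.length > 0) report.set(p, hits);
  }
  return report;
}
```

Running `checkGoalFiles(["goals/reddit-cli-auth/GOAL.md", "goals/reddit-cli-auth/VERIFY.md", "goals/reddit-cli-auth/PROGRESS.md"])` and expecting an empty map would be one way to gate the handoff.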

Paste the second /goal command. Press enter. Walk away again.

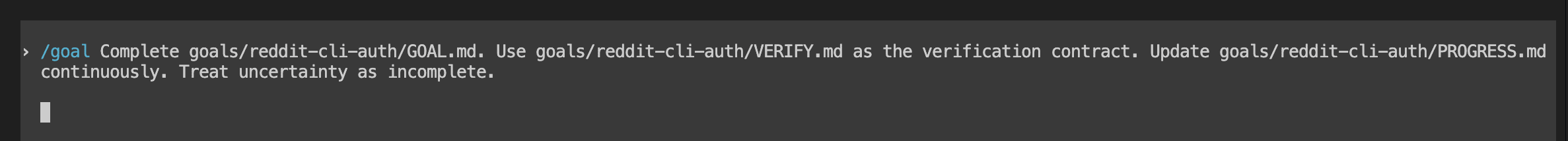

/goal Complete goals/reddit-cli-auth/GOAL.md. Use goals/reddit-cli-auth/VERIFY.md as the verification contract. Update goals/reddit-cli-auth/PROGRESS.md continuously. Treat uncertainty as incomplete.

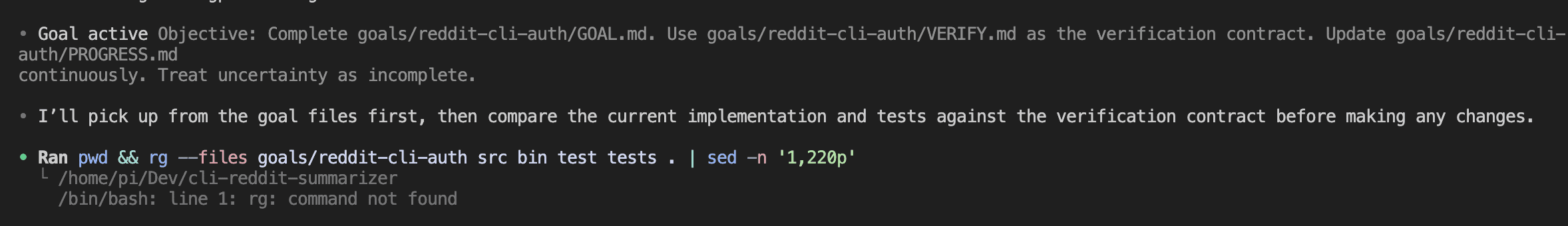

Codex activated the second goal.

Same startup pattern — read the goal trio, explore the repo, compare current implementation against the verification contract, build a plan.

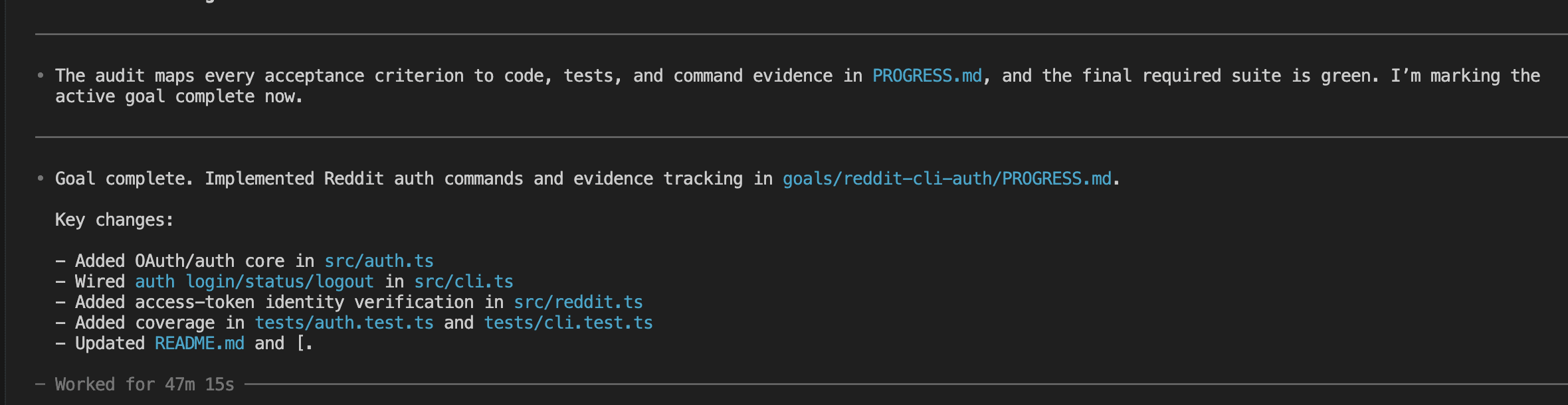

47 minutes and 15 seconds later…

What shipped: auth login (a full OAuth Authorization Code flow with a temporary localhost callback server), auth status (machine-readable token and identity info), and auth logout (with an optional --revoke flag to invalidate the token server-side), all wired into the existing CLI. 35 total tests across 6 files. Build, typecheck, and test all green.
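The two security-relevant pieces of that login flow are the authorize URL and the check on the localhost callback. Reddit's OAuth2 docs define the `https://www.reddit.com/api/v1/authorize` endpoint with `client_id`, `response_type=code`, `state`, `redirect_uri`, `duration`, and `scope`. `duration=permanent` is what gets a refresh token back. The TypeScript sketch below covers those two steps only. The scope list, function names, and redirect URI are assumptions, and the real implementation may differ.

```typescript
import { randomBytes } from "node:crypto";

// URL the user opens in a browser. The scope list here is an assumption.
function buildAuthorizeUrl(clientId: string, redirectUri: string, state: string): string {
  const url = new URL("https://www.reddit.com/api/v1/authorize");
  url.searchParams.set("client_id", clientId);
  url.searchParams.set("response_type", "code");
  url.searchParams.set("state", state);
  url.searchParams.set("redirect_uri", redirectUri); // must match the app registration exactly
  url.searchParams.set("duration", "permanent"); // request a refresh token
  url.searchParams.set("scope", "identity read");
  return url.toString();
}

// Validates the request path that hits the temporary localhost callback server
// (e.g. "/callback?state=...&code=...") and returns the authorization code.
function parseCallback(requestPath: string, expectedState: string): string {
  const url = new URL(requestPath, "http://localhost");
  const error = url.searchParams.get("error");
  if (error) throw new Error(`authorization denied: ${error}`);
  if (url.searchParams.get("state") !== expectedState) {
    throw new Error("state mismatch, aborting login");
  }
  const code = url.searchParams.get("code");
  if (!code) throw new Error("callback did not include a code");
  return code;
}

// Unguessable per-login state value (32 hex characters).
const newState = (): string => randomBytes(16).toString("hex");
```

The returned code would then be exchanged at the same access_token endpoint with `grant_type=authorization_code`. Rejecting a mismatched `state` keeps a forged callback from injecting someone else's code.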

An interesting wrinkle surfaced during this run.

The second time I pasted a /goal command and walked away, it felt… ordinary. The novelty was gone. I didn’t hover over the terminal wondering if it would work. I just left.

That’s the point. When the second walk-away feels routine, the pattern has landed.

.

.

.

Does It Actually Work?

Same instinct as the WP post: close the terminal and test it like a real user.

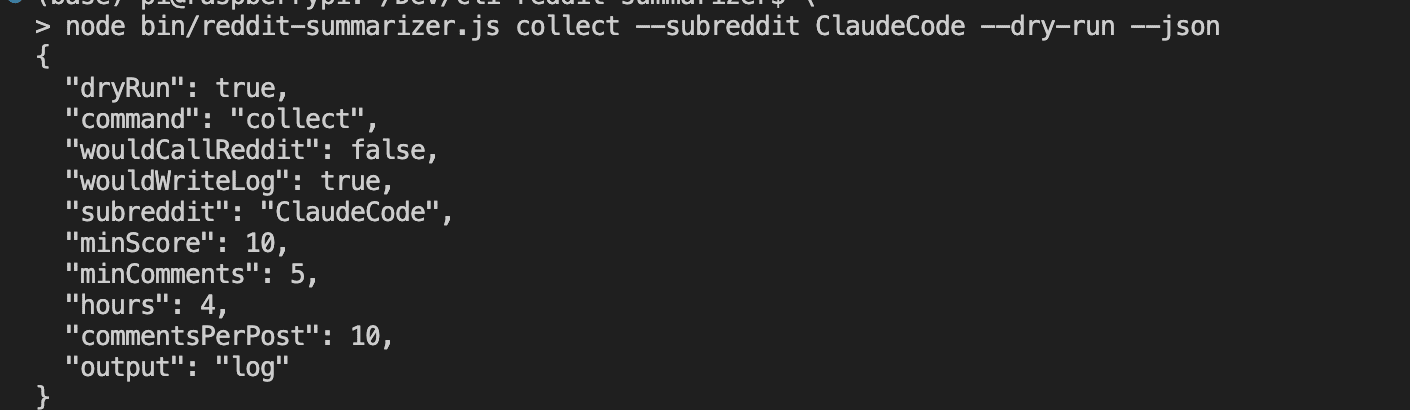

First, the dry run:

node bin/reddit-summarizer.js collect --subreddit ClaudeCode --dry-run --json

Clean JSON showing exactly what would happen without calling Reddit or writing files. dryRun: true, wouldCallReddit: false, wouldWriteLog: true. This is the --dry-run pattern in action — an AI agent invoking this CLI can preview any command before committing to side effects.
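One way to make --dry-run trustworthy is to hand side effects to the command as functions, so the preview path cannot reach them. This TypeScript sketch reuses the three field names from the output above. Everything else is hypothetical, including the `--output` values other than `both` and the exact meaning of each field. Here `wouldCallReddit` reports that the preview itself makes no network call, and `wouldWriteLog` reports whether a real run with the same options would persist a log.

```typescript
type OutputMode = "stdout" | "file" | "both"; // only "both" appears in the post

interface CollectOptions {
  subreddit: string;
  hours: number;
  output: OutputMode;
  dryRun: boolean;
}

interface CollectPlan {
  dryRun: true;
  subreddit: string;
  wouldCallReddit: false; // the preview never touches the network
  wouldWriteLog: boolean; // would a real run with these options write a log?
}

function planCollect(opts: CollectOptions): CollectPlan {
  return {
    dryRun: true,
    subreddit: opts.subreddit,
    wouldCallReddit: false,
    wouldWriteLog: opts.output === "file" || opts.output === "both",
  };
}

// Side effects are injected, so the dry-run branch returns before any of them run.
async function collect(
  opts: CollectOptions,
  effects: {
    fetchPosts: () => Promise<unknown[]>;
    writeLog: (posts: unknown[]) => Promise<void>;
  },
): Promise<unknown> {
  if (opts.dryRun) return planCollect(opts);
  const posts = await effects.fetchPosts();
  if (opts.output !== "stdout") await effects.writeLog(posts);
  return { dryRun: false, subreddit: opts.subreddit, count: posts.length };
}
```

Because the plan is computed from the same options the real run uses, an agent can diff "what would happen" against "what happened" without calling Reddit.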

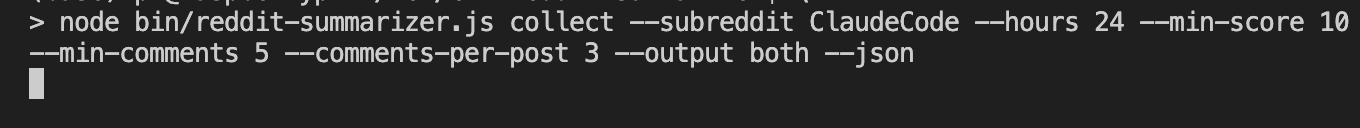

Then the real run:

node bin/reddit-summarizer.js collect --subreddit ClaudeCode --hours 24 --min-score 10 --min-comments 5 --comments-per-post 3 --output both --json

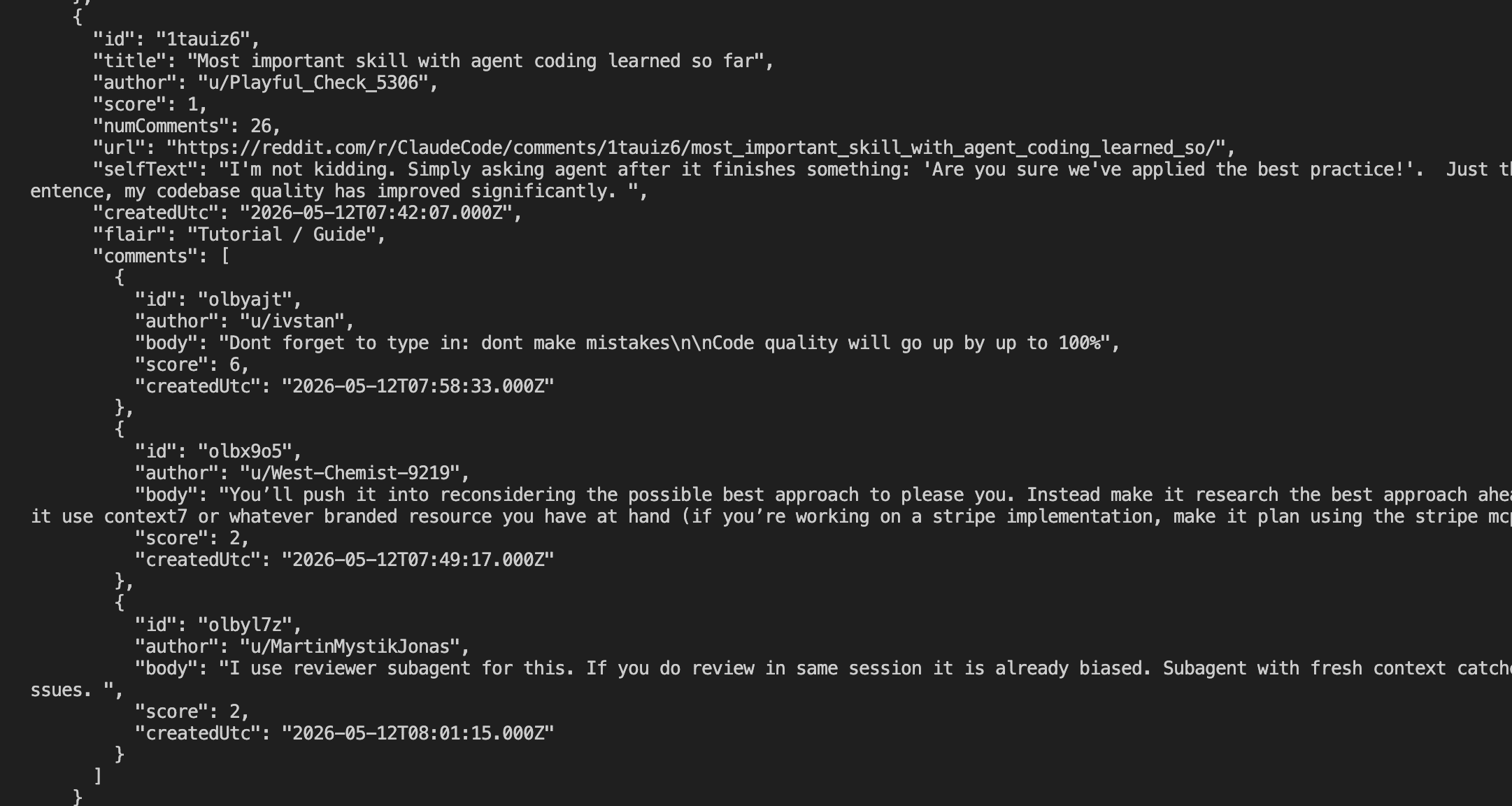

Real Reddit data came back. Posts from r/ClaudeCode with titles, authors, scores, comment counts, URLs, flairs, timestamps, and threaded comment data — machine-parseable JSON that any agent can consume directly.
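The --hours, --min-score, and --min-comments flags amount to a filter over Reddit's listing fields (`created_utc` in seconds, `score`, `num_comments`). Here is a minimal TypeScript sketch, assuming the thresholds are inclusive. The post doesn't say, and the generated CLI may differ. The function name is hypothetical.

```typescript
interface RedditPostData {
  title: string;
  score: number;
  num_comments: number;
  created_utc: number; // seconds since the Unix epoch, as in Reddit listing JSON
}

interface FilterOptions {
  hours: number; // --hours
  minScore: number; // --min-score
  minComments: number; // --min-comments
}

// Keeps posts inside the time window that meet both thresholds (inclusive).
function filterPosts(
  posts: RedditPostData[],
  opts: FilterOptions,
  nowMs: number = Date.now(),
): RedditPostData[] {
  const cutoffSec = nowMs / 1000 - opts.hours * 3600;
  return posts.filter(
    (p) =>
      p.created_utc >= cutoffSec &&
      p.score >= opts.minScore &&
      p.num_comments >= opts.minComments,
  );
}
```

Passing `nowMs` explicitly keeps the function deterministic in tests. Production calls can rely on the default.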

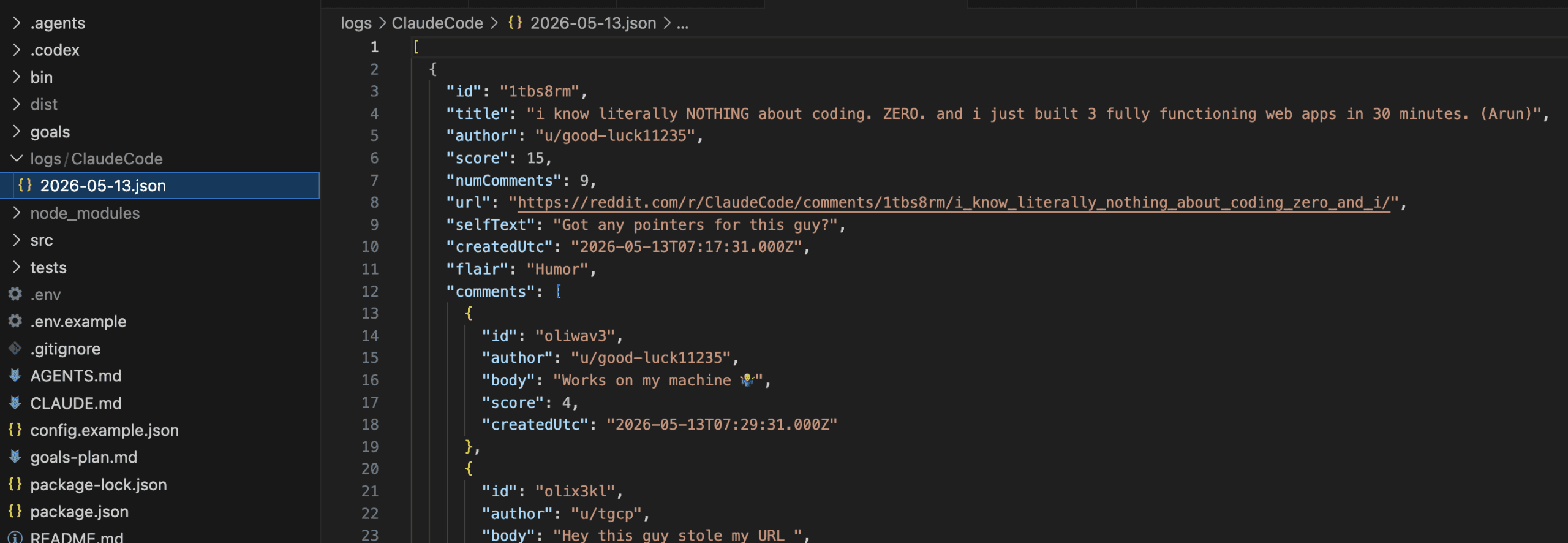

The log file landed exactly where GOAL.md said it would — logs/ClaudeCode/2026-05-13.json — with the same structured data persisted to disk for downstream processing.
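The log location follows a `logs/<subreddit>/<YYYY-MM-DD>.json` shape. A minimal TypeScript sketch of computing it is below. It assumes the date is taken in UTC, which the post doesn't specify (the real CLI may use local time). It also assumes that rejecting anything other than letters, digits, and underscores is an acceptable guard against path traversal. `logPath` is a hypothetical name.

```typescript
import { join } from "node:path";

// Subreddit names are letters, digits, and underscores; this also blocks "../" tricks.
const SUBREDDIT_NAME = /^[A-Za-z0-9_]+$/;

// Builds <root>/logs/<subreddit>/<YYYY-MM-DD>.json using the UTC calendar date.
function logPath(root: string, subreddit: string, when: Date = new Date()): string {
  if (!SUBREDDIT_NAME.test(subreddit)) {
    throw new Error(`invalid subreddit name: ${subreddit}`);
  }
  const day = when.toISOString().slice(0, 10); // "YYYY-MM-DD" in UTC
  return join(root, "logs", subreddit, `${day}.json`);
}
```

With `root` set to the project directory and a timestamp on 13 May 2026 (UTC), this resolves to the logs/ClaudeCode/2026-05-13.json path shown above.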

Two autonomous goal runs.

Zero manual coding.

The tool works against a live API.

The dry-run test proves the agent-safety patterns work.

The live run proves the Reddit integration works.

.

.

.

When to Split (And When Not To)

The skill detected the split automatically, but the heuristic is learnable.

Here’s when you should slice a project into multiple codex goal command runs:

Split when:

- The project has more than ~5 acceptance criteria spanning unrelated concerns

- There’s a natural “core first, then extensions” shape — MVP then auth, core then plugins

- One slice needs credentials or setup that another doesn’t (OAuth login needs Reddit app registration; the MVP only needs an existing token)

- The total scope would exhaust a single goal’s token budget

Keep as one goal when:

- Everything shares the same test fixtures and setup

- The feature is a single vertical slice — one user story, 3-5 acceptance criteria

- Splitting would create artificial boundaries that increase integration risk
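The rules of thumb above can be encoded roughly as follows. This TypeScript sketch is not the skill's actual heuristic, which the post does not publish. The `SpecSummary` shape is hypothetical. Two of the listed signals (token budget and artificial boundaries) are judgment calls and are left out. Shared fixtures are treated as a veto, which is an interpretation.

```typescript
interface SpecSummary {
  acceptanceCriteria: { id: string; concern: string }[]; // concern: e.g. "commands", "auth"
  concernsNeedingCredentials: string[]; // concerns that need extra setup (e.g. app registration)
  sharesFixtures: boolean; // do all concerns use the same test fixtures and setup?
}

// Returns a split recommendation plus the signals that triggered it.
function shouldSplit(spec: SpecSummary): { split: boolean; reasons: string[] } {
  const reasons: string[] = [];
  const concerns = new Set(spec.acceptanceCriteria.map((c) => c.concern));
  if (spec.acceptanceCriteria.length > 5 && concerns.size > 1) {
    reasons.push(`${spec.acceptanceCriteria.length} criteria across ${concerns.size} concerns`);
  }
  if (spec.concernsNeedingCredentials.length > 0 && concerns.size > 1) {
    reasons.push(`extra setup needed for: ${spec.concernsNeedingCredentials.join(", ")}`);
  }
  // Shared fixtures argue for one goal even when other signals fire.
  const split = reasons.length > 0 && !spec.sharesFixtures;
  return { split, reasons };
}
```

The `reasons` array matters as much as the boolean. It gives you something concrete to push back on when the recommendation feels wrong.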

Here’s how goals-plan.md ties it all together:

- Number your slices, give each a one-line description, and generate one goal at a time.

- Run them sequentially.

- Each /goal run inherits the codebase state from the previous one. The skill detects what already exists and generates goals that fit into the structure that’s already there.
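Sequential slices make "what runs next" a simple lookup. The real goals-plan.md format isn't shown in the post, so this TypeScript sketch assumes a hypothetical line format like `1. reddit-cli-mvp: MVP core commands`. It also assumes that completed slices are tracked by their slug. Both helpers are illustrative.

```typescript
interface Slice {
  order: number;
  slug: string;
  summary: string;
}

// Assumed line format: "<n>. <slug>: <one-line description>".
const SLICE_LINE = /^\s*(\d+)\.\s+([a-z0-9-]+)\s*:\s*(.+)$/;

// Extracts numbered slices and returns them in order.
function parsePlan(markdown: string): Slice[] {
  const slices: Slice[] = [];
  for (const line of markdown.split("\n")) {
    const m = SLICE_LINE.exec(line);
    if (m) slices.push({ order: Number(m[1]), slug: m[2], summary: m[3].trim() });
  }
  return slices.sort((a, b) => a.order - b.order);
}

// Slices run strictly in order: the next one is the first not yet completed.
function nextSlice(slices: Slice[], completed: Set<string>): Slice | undefined {
  return slices.find((s) => !completed.has(s.slug));
}
```

After the MVP goal finishes, `nextSlice(parsePlan(planText), new Set(["reddit-cli-mvp"]))` would point at reddit-cli-auth. That matches the order this build followed.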

.

.

.

The Bigger Picture

The pattern is repeating.

Last week showed the codex goal command with wp-spec-to-goal for a WordPress plugin. This post shows it with cli-spec-to-goal for a CLI tool. Skill generates spec, /goal executes spec, PROGRESS.md proves it. The domain changed, the workflow stayed the same.

Goal chaining is the multiplier.

One goal proved the concept. Two goals proved it scales. Each goal run inherits the full context of what was built before, because the codebase itself is the shared state. No context window to manage between goals — just files on disk.

And here’s the full circle.

The Reddit summarizer started as a 6-step Workflow Engineering build in March — spec brainstorm, review, test plan, implementation plan, execute, test — with active supervision at every step. The same project, rebuilt as a CLI, took two skill invocations and two /goal commands. The human work was describing what to build. The machine work was everything else.

The repo is public at github.com/nathanonn/cli-reddit-summarizer. Inspect the actual GOAL.md, VERIFY.md, and PROGRESS.md files for both goals. Grab the cli-spec-to-goal skill from .codex/skills/ if you build CLI tools.

Start thinking about your next project in terms of goal slices.
